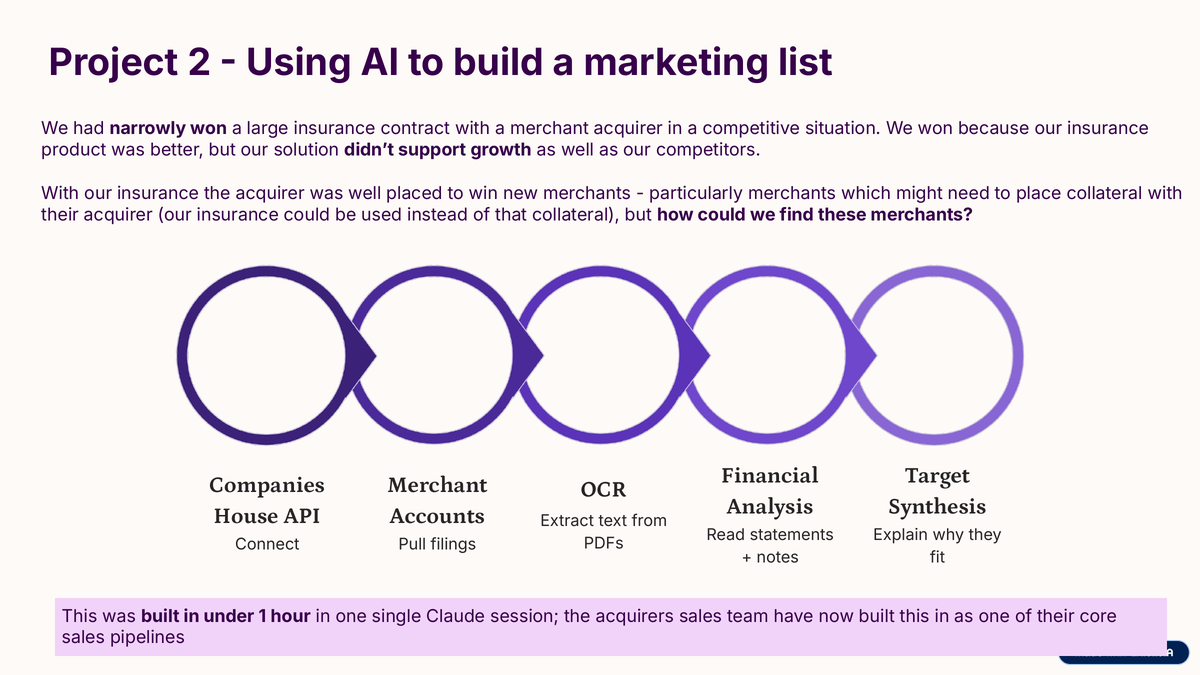

Someone asked me to build a marketing list. The ask was the kind that usually produces a multi-week scoping exercise: a list of companies of a specific shape, filtered by a handful of financial and operational characteristics and drawn from sources that did not share a schema. It is the sort of job an analyst spends two days on, ending with a spreadsheet that is approximately right. I had an hour.

The list had to satisfy a few constraints. Companies in a specific sector. Revenue within a defined band. Filed accounts recent enough to be informative. A set of signals extracted from their public materials (trading statements, annual reports), many of which could only be read with optical character recognition (OCR) because the filings were scanned PDFs rather than structured data. Cross-referenced against merchant-processing records to verify they were actively trading, not dormant. Sorted and ranked by fit to an explicit profile.

Four sources, three formats, one sorting rule. In a previous world I would have described this to an analyst, watched them work on it for a couple of days, and received a spreadsheet that was mostly right. In the new world I opened a Claude Code session and walked it through what I wanted.

What the session did

In one session, with me describing what I wanted and watching the intermediate outputs as they appeared, the following got built.

A small script that queried the Companies House Application Programming Interface (API) for companies in the right sector, size band, and filing status. Companies House has a public API, the search parameters are well-documented, and Claude wrote the query, the pagination loop, and the output parser in about six minutes. The output was a list of around two thousand candidates with their company numbers and registered addresses.
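Here is a minimal sketch of the query-plus-pagination shape for anyone reproducing the first step. It assumes Companies House's advanced company search endpoint, its `start_index`/`size` paging parameters, its `items`/`hits` response fields, and HTTP Basic auth with the API key as the username. Those details come from my reading of the public docs, not from the session, so check them before relying on this. `make_fetcher` and `iter_companies` are hypothetical names, and the filter values in the usage comment are placeholders.

```python
"""Minimal sketch: page through Companies House advanced company search.

Assumed (verify against the official API docs): the endpoint path, the
start_index/size paging parameters, the items/hits response fields, and
HTTP Basic auth with the API key as username and an empty password.
"""
import base64
import json
import urllib.parse
import urllib.request
from typing import Callable, Iterator

BASE_URL = "https://api.company-information.service.gov.uk/advanced-search/companies"

# A fetcher takes (start_index, size) and returns one decoded JSON page.
FetchPage = Callable[[int, int], dict]


def make_fetcher(api_key: str, filters: dict) -> FetchPage:
    """Build a fetcher that sends `filters` plus paging params on each request."""
    token = base64.b64encode(f"{api_key}:".encode()).decode()

    def fetch(start_index: int, size: int) -> dict:
        params = {**filters, "start_index": start_index, "size": size}
        url = f"{BASE_URL}?{urllib.parse.urlencode(params, doseq=True)}"
        req = urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
        with urllib.request.urlopen(req, timeout=30) as resp:
            return json.load(resp)

    return fetch


def iter_companies(fetch: FetchPage, page_size: int = 100) -> Iterator[dict]:
    """Yield every item across pages until a short/empty page or `hits` is reached."""
    start = 0
    while True:
        page = fetch(start, page_size)
        items = page.get("items") or []
        if not items:
            break
        yield from items
        start += len(items)
        total = page.get("hits")
        if len(items) < page_size or (total is not None and start >= total):
            break


# Usage (placeholder filters; needs a real API key and network access):
# fetch = make_fetcher(os.environ["CH_API_KEY"],
#                      {"sic_codes": ["62012"], "company_status": "active"})
# candidates = [(c["company_number"], c.get("company_name")) for c in iter_companies(fetch)]
```

Keeping the HTTP call behind a plain `fetch(start_index, size)` function lets you test the paging loop without network access.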

A second script that took the candidate list and, for each company, pulled the most recent filed accounts from Companies House. The accounts come as PDFs, some of which contain extractable text and some of which are scanned images. The script used one of the standard Python OCR libraries for the scanned ones and a PDF-to-text library for the rest. The extracted text went into a per-company folder.
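The text-first, OCR-fallback split can be sketched as follows. The library choices are assumptions, because the session's actual libraries aren't named: `pypdf` reads the text layer, and `pdf2image` plus `pytesseract` handle OCR (the latter two need the poppler and tesseract binaries installed). The 200-character threshold for treating a PDF as "scanned" is a hypothetical heuristic. The two extractors are passed in as arguments, so you can exercise the routing logic without those libraries.

```python
from pathlib import Path
from typing import Callable

# Hypothetical heuristic: a text layer shorter than this is treated as a scan.
MIN_TEXT_CHARS = 200


def text_layer(pdf_path: Path) -> str:
    """Read the embedded text layer (works for text-based PDFs)."""
    from pypdf import PdfReader  # pip install pypdf

    reader = PdfReader(str(pdf_path))
    return "\n".join(page.extract_text() or "" for page in reader.pages)


def ocr_text(pdf_path: Path) -> str:
    """Rasterise each page and OCR it (for scanned PDFs)."""
    from pdf2image import convert_from_path  # needs poppler installed
    import pytesseract  # needs the tesseract binary installed

    pages = convert_from_path(str(pdf_path), dpi=300)
    return "\n".join(pytesseract.image_to_string(img) for img in pages)


def extract_filing(
    pdf_path: Path,
    out_dir: Path,
    read_text: Callable[[Path], str] = text_layer,
    read_ocr: Callable[[Path], str] = ocr_text,
) -> tuple[str, Path]:
    """Try the text layer first; fall back to OCR if it is (nearly) empty.

    Writes <out_dir>/<pdf stem>.txt and returns (method used, output path).
    """
    text, method = read_text(pdf_path), "text"
    if len(text.strip()) < MIN_TEXT_CHARS:
        text, method = read_ocr(pdf_path), "ocr"
    out_dir.mkdir(parents=True, exist_ok=True)
    out_path = out_dir / f"{pdf_path.stem}.txt"
    out_path.write_text(text, encoding="utf-8")
    return method, out_path
```

Calling it as `extract_filing(pdf, root / company_number)` produces the per-company folder layout described above.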

A third script that used Claude to read the extracted filings and pull out the specific financial and operational signals I needed. This was the same pattern as AutoAgent: structured prompt, bounded output schema, one call per company, parallel over the list. The output was a table with one row per company and one column per signal.
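Here is a sketch of the prompt, schema, and parallel-calls pattern. The three signal names in `SCHEMA` are hypothetical stand-ins for whatever the real profile needed, and the prompt wording is illustrative. `call_model` can be any function that takes a prompt string and returns the model's text. `make_claude_caller` shows one way to build that function with the Anthropic Python SDK, and the caller chooses the model ID. The bounding happens after the call: any value that is missing, malformed, or the wrong type becomes `None` instead of leaking into the table.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical signal schema: output field -> expected Python type.
SCHEMA: dict[str, type] = {
    "turnover_gbp": int,
    "employees": int,
    "mentions_expansion": bool,
}

PROMPT = (
    "Read the company filing below. Return ONLY a JSON object with exactly "
    "these keys, using null for anything the filing does not state:\n"
    "{fields}\n\nFILING:\n{text}"
)


def build_prompt(text: str) -> str:
    fields = "\n".join(f"- {name} ({typ.__name__})" for name, typ in SCHEMA.items())
    return PROMPT.format(fields=fields, text=text)


def parse_row(raw: str) -> dict:
    """Coerce model output into SCHEMA; missing, malformed, or mistyped values become None."""
    start, end = raw.find("{"), raw.rfind("}")  # tolerate prose or code fences around the JSON
    try:
        data = json.loads(raw[start:end + 1]) if 0 <= start < end else {}
    except json.JSONDecodeError:
        data = {}
    if not isinstance(data, dict):
        data = {}
    row = {}
    for name, typ in SCHEMA.items():
        value = data.get(name)
        # bool is a subclass of int, so don't let True/False pass as an int field.
        ok = isinstance(value, typ) and not (typ is int and isinstance(value, bool))
        row[name] = value if ok else None
    return row


def extract_all(filings: dict[str, str], call_model: Callable[[str], str],
                max_workers: int = 8) -> dict[str, dict]:
    """One model call per company, run on a thread pool; returns {company_number: row}."""
    def work(item: tuple[str, str]) -> tuple[str, dict]:
        number, text = item
        return number, parse_row(call_model(build_prompt(text)))

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(work, filings.items()))


def make_claude_caller(model: str, max_tokens: int = 512) -> Callable[[str], str]:
    """Wrap the Anthropic Python SDK (reads ANTHROPIC_API_KEY from the environment)."""
    import anthropic  # pip install anthropic

    client = anthropic.Anthropic()

    def call(prompt: str) -> str:
        msg = client.messages.create(
            model=model,
            max_tokens=max_tokens,
            messages=[{"role": "user", "content": prompt}],
        )
        return msg.content[0].text

    return call
```

Because the schema is fixed, every row of the resulting table has the same columns, even when a filing is illegible or the model's reply is off-format.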

A fourth script that cross-referenced the list against the merchant database, keeping only companies that had been actively trading in the last three months.
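Here is a minimal version of the activity filter. It assumes the merchant records can be reduced to `(company_number, transaction_date)` pairs. That join key is a hypothetical simplification: real merchant data may carry only trading names and addresses, which would have to be matched to company numbers first. "Last three months" is read here as three calendar months back from an as-of date, inclusive at both ends.

```python
import calendar
from datetime import date
from typing import Iterable


def months_before(d: date, months: int) -> date:
    """Same day `months` calendar months earlier, clamped to the end of a shorter month."""
    year, month0 = divmod(d.year * 12 + (d.month - 1) - months, 12)
    last_day = calendar.monthrange(year, month0 + 1)[1]
    return date(year, month0 + 1, min(d.day, last_day))


def active_company_numbers(transactions: Iterable[tuple[str, date]],
                           as_of: date, months: int = 3) -> set[str]:
    """Company numbers with at least one transaction between the cutoff and as_of, inclusive."""
    cutoff = months_before(as_of, months)
    return {number for number, when in transactions if cutoff <= when <= as_of}


def keep_active(companies: list[dict], transactions: Iterable[tuple[str, date]],
                as_of: date, months: int = 3) -> list[dict]:
    """Filter the candidate list, preserving its order."""
    active = active_company_numbers(transactions, as_of, months)
    return [c for c in companies if c["company_number"] in active]
```

Building the set of active numbers once keeps the join linear in the number of transactions plus candidates. That matters more than elegance when the merchant side is large.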

A final script that scored each remaining company against the target profile using a simple weighted sum and sorted the output. That became the marketing list.
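The weighted-sum ranking is short enough to show in full. The weights and signal names are hypothetical. The sketch assumes each signal has already been scaled to roughly 0 to 1, with booleans counting as 1 or 0 and missing values as 0. `in_band` shows one way to turn a raw figure such as turnover into a fit score against a revenue band.

```python
from typing import Mapping, Optional

# Hypothetical target profile: signal name -> weight. Signals assumed pre-scaled to 0..1.
WEIGHTS: dict[str, float] = {
    "turnover_fit": 0.5,
    "employees_fit": 0.2,
    "mentions_expansion": 0.3,
}


def in_band(value: Optional[float], low: float, high: float) -> float:
    """1.0 if a raw figure (e.g. turnover) falls inside the band, else 0.0."""
    return 1.0 if value is not None and low <= value <= high else 0.0


def score(signals: Mapping[str, Optional[float]],
          weights: Mapping[str, float] = WEIGHTS) -> float:
    """Weighted sum; booleans count as 1/0 and missing (None) signals as 0."""
    return sum(w * float(signals.get(name) or 0) for name, w in weights.items())


def rank(rows: list[dict], weights: Mapping[str, float] = WEIGHTS) -> list[dict]:
    """Attach a score to each row and sort best-first."""
    scored = [{**row, "score": score(row, weights)} for row in rows]
    return sorted(scored, key=lambda row: row["score"], reverse=True)
```

A linear score has one practical advantage: anyone reviewing the list can see why a company ranked where it did, and can change the weights without touching the rest of the pipeline.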

Total wall time from first prompt to delivered spreadsheet: under an hour. I spent most of that hour reading the intermediate outputs to catch errors, not writing code.

What this was not

It was not a production pipeline. There was no test coverage, no error handling beyond the minimum, no logging, no retry logic. It was a scaffold. If the business wanted to run this weekly, an engineer would need to harden it. That would take time, but the answer to whether the idea worked was already in the output.
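To illustrate where "harden it" might start, here is a hypothetical retry wrapper with exponential backoff, jitter, and logging. The hour-long version had nothing like it. Retrying on `OSError` is an assumption: it covers `urllib`'s `URLError` and `HTTPError` as well as socket timeouts. In practice you would narrow it, since a 404 is not worth retrying.

```python
import logging
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("pipeline")


def with_retries(fn: Callable[[], T], attempts: int = 4, base_delay: float = 1.0,
                 retry_on: tuple[type[BaseException], ...] = (OSError,),
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(); on a retryable error, wait with exponential backoff plus jitter, then retry."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except retry_on as exc:
            if attempt == attempts:
                log.error("giving up after %d attempts: %s", attempts, exc)
                raise
            # Delay doubles each attempt; jitter scales it into [50%, 100%) of that.
            delay = base_delay * 2 ** (attempt - 1) * (0.5 + random.random() / 2)
            log.warning("attempt %d failed (%s); retrying in %.1fs", attempt, exc, delay)
            sleep(delay)
    raise AssertionError("unreachable")
```

You would wrap each network call in it, e.g. `with_retries(lambda: fetch(start, size))`, where `fetch` stands for whatever function makes the request. The `sleep` parameter exists only so the backoff can be tested without actually waiting.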

It also was not a replacement for judgement. The marketing list went to someone who knew the space, who read through the top results, rejected some for reasons the signals did not capture, and promoted some that the ranking had missed. The pipeline produced a shortlist that was better than random and better than a naive keyword search. It did not produce the final answer. It produced a starting point for someone whose time was previously spent on the cold search rather than the warm read.

The small insight

The pipeline crossed four things that were previously separate. The companies registry. The PDF filings. The payment-processing records. The target profile. Each of those is straightforward on its own and each of them has a data team somewhere that could work on it in isolation. What took an hour in this case would have taken considerably longer to coordinate through the usual channels, not because any one piece is hard, but because the cost of crossing boundaries between data sources is usually greater than the cost of the work inside each source.

Agents lower that crossing cost to almost zero. The same session that wrote the Companies House call wrote the OCR extraction, the Claude prompting, the merchant cross-reference, and the ranking. No handover, no specification, no version mismatch. The system thought in features and fetched whatever it needed, wherever it was.

That is not a claim that everything should be built this way. Infrastructure that runs continuously still needs proper engineering, and the scripts I produced in that hour would not hold up under that demand. But for bounded, one-shot analytical work of this shape, the old process is now the slow process. The instinct to scope, specify, and delegate that work is correct only if the work is going to be run again. For a single marketing list, it is not.

The person who asked for the list had it that afternoon. The pipeline is a folder of five files on my laptop that I have not touched since. That is fine. It did what it needed to do.

Sources and schema are deliberately generic. The Companies House API is public, OCR libraries are open source, and the pipeline pattern is reproducible by anyone with a similar question and an afternoon.