Everything on this site started with a hackathon on 11 February 2026. One day. One internal event. Build something with artificial intelligence (AI) that would be useful to the business. I was Chief Risk Officer (CRO) at the time, not a developer, and I had no intention of writing code. By the end of the day I had written a pipeline that crawled 30,000 merchant websites and scored them for fraud indicators. I did not write a single line of it by hand.

The problem was not new. I had been thinking about it for most of the previous year. Merchant-risk analysts manually review websites as part of onboarding and ongoing monitoring. A good analyst can read a website and form a judgement in about twenty minutes. Is this a real business? Does the catalogue make sense? Are the compliance pages real or boilerplate? Does the contact address use a corporate domain or a free email provider? The work is skilled and it does not scale. A portfolio of tens of thousands of merchants needs a model, not a person.

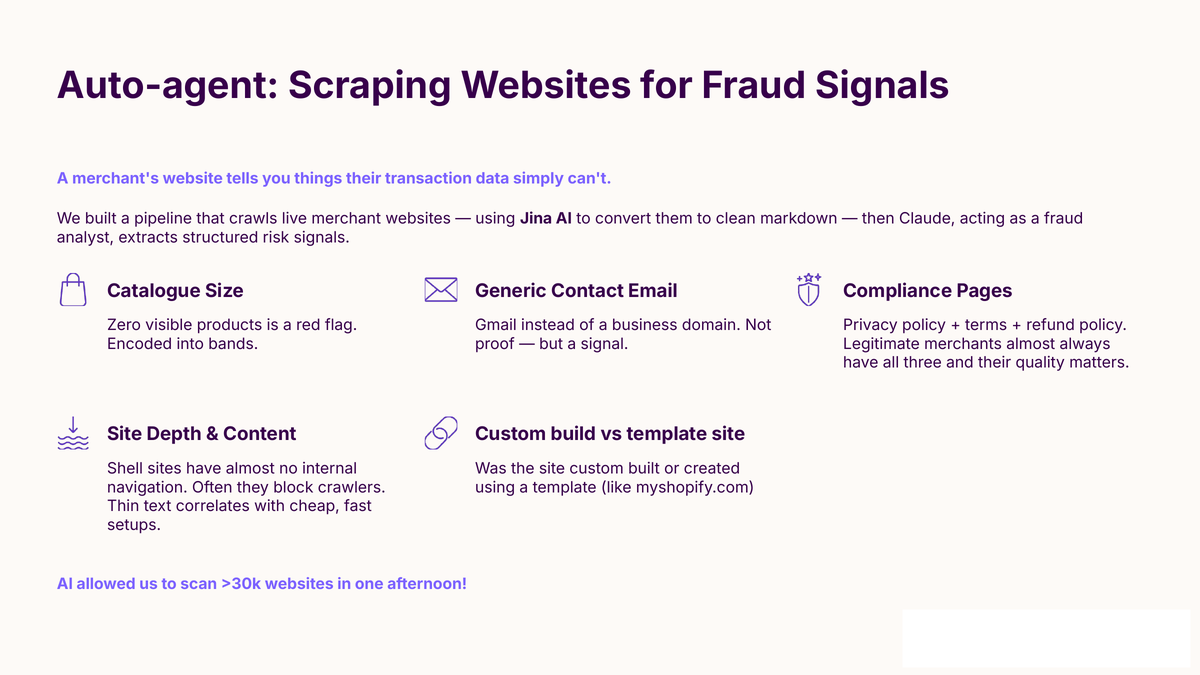

We had already done the obvious things. Transactional signals, domain intelligence, external risk scores from the usual vendors. Those are useful but they miss the specific thing a human reviewer catches, which is that the website itself is often the tell. A merchant claiming to be a £5m-a-year fashion retailer with a Shopify theme, three SKUs, a Gmail contact address, and a “terms and conditions” page copied verbatim from a template is rarely a £5m-a-year fashion retailer.

What I wanted was the analyst’s judgement, automated, scored, applied to the whole book. The hackathon was an excuse to find out whether that was possible in a day.

What I built

Two pieces, stitched together.

The first piece converted a merchant’s website into something a language model could read. I used Jina AI’s reader Application Programming Interface (API), which accepts a Uniform Resource Locator (URL) and returns clean markdown of the page content without the usual scaffolding (navigation, cookie banners, ads). Cheap, fast, and good enough for fraud analysis. One call for each merchant homepage, plus one for each of a handful of common internal paths (/about, /contact, /terms, /privacy).
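
For anyone rebuilding this step, here is a minimal sketch and not the original script. It assumes the reader is called by prefixing the target page URL with `https://r.jina.ai/`, and that merchants are identified by bare domain names. The function names (`page_urls`, `reader_url`, `fetch_site_markdown`) are hypothetical, and the HTTP call is injectable so the logic can be checked without touching the network.

```python
from typing import Callable, Dict, List
from urllib.request import Request, urlopen

# Assumption: the reader endpoint is used by prefixing the page URL.
READER_PREFIX = "https://r.jina.ai/"
# Homepage ("") plus the common internal paths named in the post.
COMMON_PATHS = ["", "/about", "/contact", "/terms", "/privacy"]


def page_urls(domain: str) -> List[str]:
    """Build the list of pages to read for one merchant domain."""
    base = "https://" + domain.strip().rstrip("/")
    return [base + path for path in COMMON_PATHS]


def reader_url(page_url: str) -> str:
    """Wrap a page URL so the request goes through the reader."""
    return READER_PREFIX + page_url


def http_get(url: str, timeout: float = 30.0) -> str:
    """Plain HTTP GET that returns the response body as text."""
    request = Request(url, headers={"User-Agent": "merchant-site-reader-sketch"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_site_markdown(
    domain: str, fetch: Callable[[str], str] = http_get
) -> Dict[str, str]:
    """Return {page_url: markdown} for every page that could be fetched."""
    pages: Dict[str, str] = {}
    for url in page_urls(domain):
        try:
            pages[url] = fetch(reader_url(url))
        except OSError:
            # Missing pages and network failures are skipped, not fatal.
            continue
    return pages
```

A missing /terms or /privacy page is itself a signal, so a real pipeline would probably record which paths failed rather than silently dropping them.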

The second piece was Claude reading that markdown with a structured prompt. The prompt described what a merchant-risk analyst looks for and asked for specific, bounded outputs. Catalogue size band (1-10, 10-100, 100-1000, 1000+). Contact email type (corporate domain, free provider, missing). Compliance pages present (terms, privacy, refund policy, each as yes or no). Site depth (number of internal links resolved). Template versus custom build (one of: clearly-template, likely-template, unclear, likely-custom). A few more. Nothing creative, nothing open-ended. The analyst’s own mental checklist, forced into structured output.
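
One way to keep outputs bounded like this is to ask for JSON and reject anything outside the allowed values. The sketch below is an illustration under assumptions, not the prompt or code actually used: the field names, the prompt wording, and the helpers `build_prompt` and `parse_signals` are all hypothetical, and the model call itself is left out. The allowed values are copied from the checklist above.

```python
import json
from typing import Any, Dict

# Allowed values per categorical field, as listed in the post.
# The field names themselves are hypothetical.
ALLOWED = {
    "catalogue_size_band": {"1-10", "10-100", "100-1000", "1000+"},
    "contact_email_type": {"corporate domain", "free provider", "missing"},
    "build_type": {"clearly-template", "likely-template", "unclear", "likely-custom"},
}
YES_NO_FIELDS = ("terms_page", "privacy_page", "refund_policy_page")


def build_prompt(markdown: str) -> str:
    """Assemble a prompt asking for one JSON object with bounded fields."""
    lines = ["You are a merchant-risk analyst. Reply with one JSON object only."]
    for field, values in ALLOWED.items():
        lines.append(f"- {field}: one of {sorted(values)}")
    for field in YES_NO_FIELDS:
        lines.append(f"- {field}: 'yes' or 'no'")
    lines.append("- site_depth: non-negative integer")
    lines.append("Website content follows.")
    lines.append(markdown)
    return "\n".join(lines)


def parse_signals(reply: str) -> Dict[str, Any]:
    """Validate a model reply; raise ValueError on anything out of bounds."""
    data = json.loads(reply)  # JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    signals: Dict[str, Any] = {}
    for field, allowed in ALLOWED.items():
        value = data.get(field)
        if not isinstance(value, str) or value not in allowed:
            raise ValueError(f"{field}: unexpected value {value!r}")
        signals[field] = value
    for field in YES_NO_FIELDS:
        value = data.get(field)
        if value not in ("yes", "no"):
            raise ValueError(f"{field}: expected 'yes' or 'no', got {value!r}")
        signals[field] = value
    depth = data.get("site_depth")
    if isinstance(depth, bool) or not isinstance(depth, int) or depth < 0:
        raise ValueError(f"site_depth: expected non-negative integer, got {depth!r}")
    signals["site_depth"] = depth
    return signals
```

Rejecting out-of-range replies rather than coercing them keeps every column of the final table restricted to a known set of values.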

I ran it across the portfolio using a small Python loop. One worker per core on my laptop, rate-limited against Jina, parallel over a list of domains. The model calls were the most expensive step for any one merchant but cheap in aggregate, because the prompts, the inputs, and the outputs were all short. By 4pm the system had done 30,000 sites. The cost was a few pounds in AI tokens.
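
A loop of that shape might look like the sketch below. It is an assumption-laden illustration, not the script from the day: it uses a thread pool sized to the core count, a simple shared limiter that spaces out request starts, and a caller-supplied `score_one` function standing in for the fetch-and-score step. `RateLimiter`, `score_portfolio`, and the default rate are hypothetical.

```python
import os
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, Iterable, Optional


class RateLimiter:
    """Space out calls so that at most max_per_second start each second."""

    def __init__(self, max_per_second: float) -> None:
        self._interval = 1.0 / max_per_second
        self._lock = threading.Lock()
        self._next_slot = time.monotonic()

    def wait(self) -> None:
        # Reserve the next free slot under the lock, then sleep outside it.
        with self._lock:
            now = time.monotonic()
            slot = max(self._next_slot, now)
            self._next_slot = slot + self._interval
        delay = slot - now
        if delay > 0:
            time.sleep(delay)


def score_portfolio(
    domains: Iterable[str],
    score_one: Callable[[str], Dict[str, Any]],
    max_per_second: float = 5.0,  # hypothetical limit, tune to the provider
    workers: Optional[int] = None,
) -> Dict[str, Dict[str, Any]]:
    """Score every domain in parallel; one failure never stops the run."""
    limiter = RateLimiter(max_per_second)

    def task(domain: str):
        limiter.wait()
        try:
            return domain, score_one(domain)
        except Exception as exc:
            # Record the error against the merchant and carry on.
            return domain, {"error": str(exc)}

    pool_size = workers or os.cpu_count() or 1
    with ThreadPoolExecutor(max_workers=pool_size) as pool:
        return dict(pool.map(task, domains))
```

Threads suit this workload because each worker spends most of its time waiting on the network. Capturing errors per merchant matters at this scale, since some fraction of 30,000 sites will time out or refuse the request.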

The output was a table. One row per merchant, one column per signal. That table went straight into the bigger model the team had been building, as features.
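
As a sketch of that last step, the per-merchant results can be flattened into a comma-separated table with the standard library. The function name `signals_to_csv` and the `domain` column are assumptions for illustration, and the snippet makes no claim about the format the downstream model actually consumed.

```python
import csv
import io
from typing import Any, Dict


def signals_to_csv(results: Dict[str, Dict[str, Any]]) -> str:
    """Render {domain: {signal: value}} as CSV, one row per merchant."""
    # Use the union of all signal names so every row has every column.
    columns = sorted({key for row in results.values() for key in row})
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=["domain"] + columns,
        restval="",  # leave a blank cell where a merchant lacks a signal
        lineterminator="\n",
    )
    writer.writeheader()
    for domain in sorted(results):
        writer.writerow({"domain": domain, **results[domain]})
    return buffer.getvalue()
```

Blank cells for missing signals keep failed or partial reads visible in the feature table instead of dropping those merchants.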

Why it mattered to me

Two things.

First, the output was useful on its own. Not as a final risk score. As a shortlist of merchants whose websites had the analyst-visible tells of something not being right, which a human could then look at in five minutes rather than twenty. That shortlist compressed a week of manual triage into an afternoon. It found cases that the transactional signals alone had not surfaced, because some failure modes only show up once you look at the website. The value was not speculative; it was a concrete output the fraud team could act on the next morning.

Second, and this is the part that stuck with me: I built it alone, in one day, with no prior intention of writing code. That is not a flex. It is an observation. The work that would have taken the engineering team several weeks (scoping, specification, handover, build, quality assurance, deployment) I did in an afternoon by talking to a coding assistant and iterating against real outputs. The subject-matter expert and the builder were the same person. No translation layer. No specification drift. No “we built what you asked for, not what you wanted” after three sprints.

I do not want to overstate this. The code was not production code. It was scripts on a laptop, held together with duct tape and optimism. It would have needed a proper rewrite by engineers before being deployed anywhere near regulated infrastructure. But the insight the pipeline produced, the shape of the feature set, and the proof that the idea worked were all there. The scripts were an artefact. The understanding they produced was the real output.

What I called it then and what I call it here

Internally the tool had a name. I am not going to use it here, because it is a live piece of risk infrastructure. For the purposes of this site I will call it AutoAgent. There is no public repository. Anyone wanting to build the same thing for their own portfolio can follow the description above and produce something equivalent in a day. The patterns are small and generic. The value comes from applying them to a specific problem you already understand.

What it made me change

I do not think I would have spent the next two months building everything else on this site without the hackathon. Before it, I believed AI was useful for tasks. After it, I believed AI was useful for systems, and that the binding constraint on personal productivity was no longer my willingness to specify the work but my willingness to build the operating environment around the work. Memory. Context. Skills. Hooks. Safety. Every post on this site is downstream of that shift.

There is a post on this site about what it means to be AI-native. That post is the thesis. This post is the moment I realised it was true.

11 February 2026. One day, one pipeline, 30,000 websites. The workplace and tool are both kept generic; the counts and the dates are not.