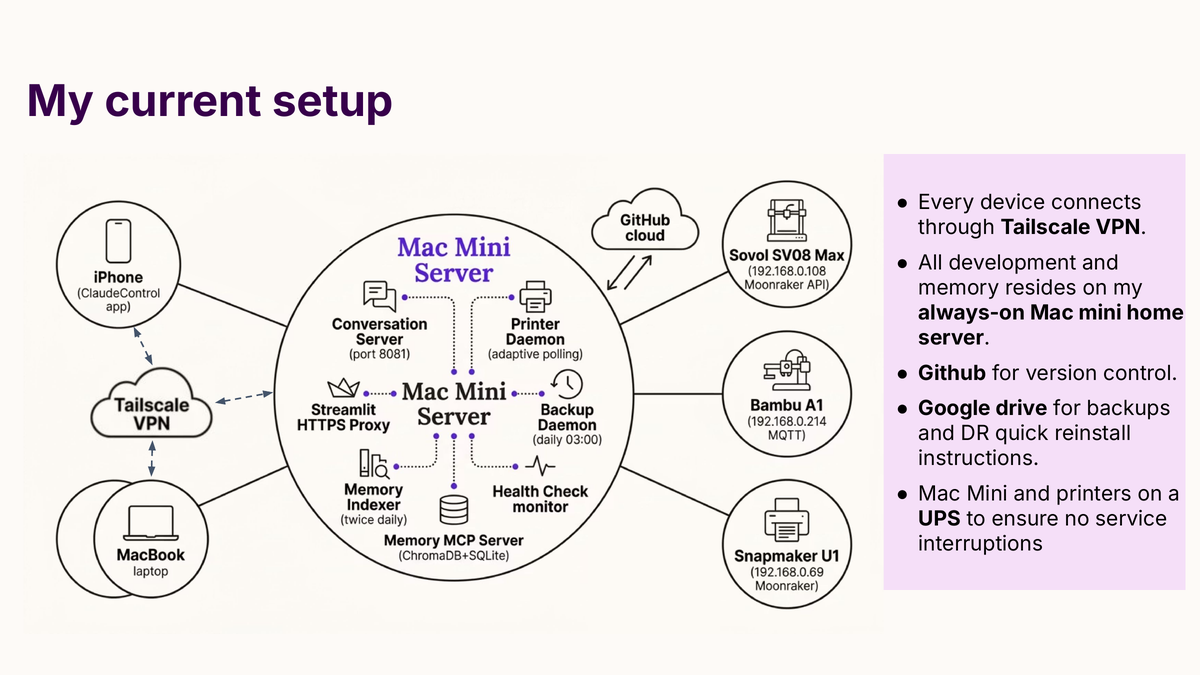

The Mac Mini on the shelf runs about fifteen always-on services and hosts most of what is described on this site. If it dies, the questions that matter are: what can I recover, how fast, and with what fidelity? I have written the answer down as a runbook and I test the steps periodically. The recovery time objective is roughly one hour from a dead disk to a fully verified system. This post is the worked version of how that is possible for a one-person setup, and a specific accounting of the gaps I have chosen to accept.

What is actually at risk

Seven layers can fail independently. The recovery for each is different. This is the taxonomy I use:

- Mac Mini disk loss. Full hardware rebuild or disk replacement.

- Google account compromise or lockout. The backup store is no longer reachable.

- GitHub account compromise. The primary code store is no longer reachable.

- Single Google OAuth token loss. Backups and the governors’ app stop working, but the Google account itself is fine.

- Printer in an unsafe state. Covered in detail in a companion post.

- Memory corruption. The topic files, the vector store, or the keyword index have been damaged.

- Conversation server outage. The daemon that brokers mobile access has stopped.

None of these is theoretical. Five of the seven have happened at least once on my setup. The runbook has specific procedures for each; the rest of this post covers the ones that shape the overall architecture.

What gets backed up

Everything that is authoritative and cannot be cheaply regenerated is backed up. Everything that is derivable or replaceable is not. This is a discipline; the list of intentional exclusions is as important as the list of inclusions.

The daily backup, run at 03:00 by a LaunchAgent against Google Drive, captures these sets:

- Code. All Python, shell, YAML Ain’t Markup Language (YAML), JavaScript Object Notation (JSON), markdown, TOML and signing key files at the top level of ~/code/, plus several subdirectories containing local-only state (OAuth tokens, Streamlit secrets, encrypted context).

- Control plane. The full tim-claude-controlplane repository contents, including its YAML manifests, hooks, LaunchAgent plists, and scripts. This is redundant with GitHub; it is on Drive so that a simultaneous loss of GitHub and the Mac Mini is still recoverable.

- Daemon scripts. Symlink-deployed daemon scripts under ~/.local/lib/ and ~/.local/bin/.

- Claude Code configuration. ~/.claude/settings.json and ~/.claude/keybindings.json.

- LaunchAgent plists. Every com.timtrailor.*.plist under ~/Library/LaunchAgents/.

- Memory topic files for the laptop project. The Mac Mini project’s memory tree is a separate git repository pushed to GitHub, so it backs itself up independently.
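The 03:00 schedule is the kind of thing launchd expresses with a StartCalendarInterval key. A minimal sketch that generates such a LaunchAgent plist with Python’s standard plistlib; the label com.timtrailor.backup, the /usr/bin/python3 interpreter, and the ~/code/backup_to_drive.py location are hypothetical placeholders, not the real agent’s values.

```python
import plistlib
from pathlib import Path

# Hypothetical label and paths: the real agent's names are not given in the post.
LABEL = "com.timtrailor.backup"
SCRIPT = Path.home() / "code" / "backup_to_drive.py"


def backup_agent_plist(label: str = LABEL, script: Path = SCRIPT) -> bytes:
    """Return an XML plist asking launchd to run the backup daily at 03:00."""
    agent = {
        "Label": label,
        # ProgramArguments is the argv launchd executes directly; no shell is involved.
        "ProgramArguments": ["/usr/bin/python3", str(script)],
        # Fire once a day at 03:00 local time. If the Mac is asleep at that
        # moment, launchd runs the job when it next wakes instead of skipping it.
        "StartCalendarInterval": {"Hour": 3, "Minute": 0},
        "StandardOutPath": f"/tmp/{label}.out.log",
        "StandardErrorPath": f"/tmp/{label}.err.log",
    }
    return plistlib.dumps(agent)


if __name__ == "__main__":
    print(backup_agent_plist().decode())
```

Written to ~/Library/LaunchAgents/, a file like this would be loaded with launchctl bootstrap gui/$(id -u) followed by the plist path.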

The backup is differential by modification time and checksum. The first run is slow; subsequent runs upload only what has changed. The script maintains a manifest at ~/code/.backup_manifest.json so that restoring does not require re-reading every file.
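The post does not show the backup script’s internals. A minimal sketch of the differential idea, assuming a manifest that maps each relative path to its last-seen mtime and SHA-256; the JSON layout and function names are hypothetical, not the actual format of ~/code/.backup_manifest.json. The cheap mtime comparison runs first, and a checksum is computed only when the mtime has moved, so a touched-but-unchanged file is not re-uploaded.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large files are never read into memory whole."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def changed_files(root: Path, manifest_path: Path) -> tuple[list[str], dict]:
    """Return (relative paths to upload, updated manifest) for every file under root.

    The caller should save the updated manifest only after the uploads succeed,
    so that a failed run is retried next time.
    """
    try:
        old = json.loads(manifest_path.read_text())
    except FileNotFoundError:
        old = {}  # first run: every file counts as new
    skip = manifest_path.resolve()
    new, to_upload = {}, []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        if path.resolve() == skip:
            continue  # the manifest is not tracked by its own entries
        rel = path.relative_to(root).as_posix()
        mtime = path.stat().st_mtime
        entry = old.get(rel)
        if entry and entry["mtime"] == mtime:
            new[rel] = entry  # cheap path: mtime unchanged, trust the stored checksum
            continue
        checksum = sha256_of(path)
        new[rel] = {"mtime": mtime, "sha256": checksum}
        if not entry or entry["sha256"] != checksum:
            to_upload.append(rel)  # new file, or its content really changed
    return to_upload, new
```

A dry run in this model is simply printing the first element of the result instead of uploading it.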

The full backup set is currently about 3 gigabytes uncompressed. Google Drive’s 15-gigabyte free tier has been the operational budget; after some trimming in mid-April, the account has roughly 2 gigabytes of headroom. I re-check that quarterly.
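The quarterly headroom check is a few lines of arithmetic. A sketch, assuming the input is the storageQuota object returned by the Google Drive v3 about.get call, whose limit and usage fields arrive as decimal strings and whose limit is omitted for unlimited plans; the 1 GiB warning threshold is an illustrative choice, not a figure from the runbook.

```python
from typing import Optional, Tuple

GIB = 1024 ** 3


def drive_headroom(storage_quota: dict, warn_below: int = 1 * GIB) -> Tuple[Optional[int], bool]:
    """Return (bytes free, whether to warn) from a Drive storageQuota mapping.

    Drive reports int64 values as strings, and leaves out "limit" entirely
    when storage is unlimited, so both cases are handled here.
    """
    if "limit" not in storage_quota:
        return None, False  # unlimited plan: no ceiling to watch
    free = int(storage_quota["limit"]) - int(storage_quota["usage"])
    return free, free < warn_below
```

With a 15 GiB limit and 13 GiB used, this returns 2 GiB free and no warning.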

What is deliberately not backed up

Six categories are excluded, each with a specific reason.

The school governors’ raw document corpus (roughly one gigabyte of PDFs and documents at ~/Desktop/school docs/). The source of truth is GovernorHub, a software-as-a-service platform that the federation uses as its document repository. A weekly LaunchAgent syncs from GovernorHub to the desktop. Backing up the raw documents to Drive would have consumed 94% of the backup volume and added no recovery capability, because the restore path would still be “re-download from GovernorHub”. I removed the raw documents from the backup set in April 2026. The processed, encrypted context used by the governors’ app (a 268-kilobyte blob) is backed up; the raw documents are not.

iOS application source trees. All five iOS projects (the TerminalApp, ClaudeControl, GovernorsApp and PrinterPilot applications, plus a shared SwiftUI framework) live in public or private GitHub repositories. Recovery is a git clone. The Xcode build artefacts are large and regeneratable, so they are excluded too.

The ChromaDB and FTS5 indices backing the memory system. These are derivable from the JSONL session transcripts via a rebuild script. They are large (around 500 megabytes) and regenerate in a few minutes, so they are excluded.
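Because the indices are derived data, rebuilding the keyword half can be as simple as dropping the table and replaying the transcripts. A sketch using Python’s sqlite3 module with an FTS5 virtual table; the transcript shape (one JSON object per line with role and text fields, one file per session) and the table name are assumptions, since the post does not describe the real schema. It also assumes the local SQLite build includes FTS5, which most do.

```python
import json
import sqlite3
from pathlib import Path


def rebuild_keyword_index(db_path: str, transcript_dir: Path) -> int:
    """Drop and rebuild an FTS5 index from *.jsonl transcripts; return rows indexed."""
    conn = sqlite3.connect(db_path)
    try:
        # Derived data, so throwing the old table away is always safe.
        conn.execute("DROP TABLE IF EXISTS messages")
        conn.execute("CREATE VIRTUAL TABLE messages USING fts5(session, role, body)")
        count = 0
        with conn:  # commit all inserts together at the end
            for path in sorted(transcript_dir.glob("*.jsonl")):
                with path.open(encoding="utf-8") as handle:
                    for line in handle:
                        if not line.strip():
                            continue  # tolerate blank lines
                        record = json.loads(line)
                        conn.execute(
                            "INSERT INTO messages (session, role, body) VALUES (?, ?, ?)",
                            # Hypothetical: the file stem is used as the session id.
                            (path.stem, record.get("role", ""), record.get("text", "")),
                        )
                        count += 1
        return count
    finally:
        conn.close()
```

Searching is then a MATCH query against the messages table. The vector store would need an equivalent replay through an embedding model, which is not sketched here.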

JSONL session transcripts. The raw conversation history. This is the category I have considered most carefully. The topic files (the authoritative memory tier) are backed up via the tim-memory git repository. The vector and keyword indices can be rebuilt from the transcripts, or, in a degraded form, from the topic files alone. The transcripts themselves, the raw record of specific conversations, are not backed up: if the Mac Mini loses its disk, the conversation history is gone. I consider this an accepted gap. The facts survive (in topic files), the indices can be rebuilt (from topic files, in degraded form), and the operational system works. What is lost is the ability to search for the specific phrasing of historical conversations. That is a real loss, but not an operationally crippling one. I have logged it explicitly in the runbook so that future-me does not read it and conclude the transcripts are protected when they are not.

iOS application signing certificates. Re-downloaded from Apple’s developer portal on rebuild.

Ngrok or Cloudflared tunnel tokens. Removed entirely in March 2026 in favour of Tailscale, which does not require persistent tunnel state.
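Keeping inclusions and exclusions as data, rather than as conditionals scattered through a script, keeps the discipline auditable in one place. A sketch of a membership test where exclusions are checked first so they always win; the rules are illustrative, loosely drawn from the categories above, and leave out the state subdirectories and other sets the real backup covers.

```python
from pathlib import PurePosixPath

# Illustrative rules only, written against paths relative to the home directory.
CODE_SUFFIXES = {".py", ".sh", ".yaml", ".yml", ".json", ".md", ".toml"}
EXCLUDED_PREFIXES = (
    "Desktop/school docs/",  # source of truth is GovernorHub
)
EXCLUDED_SUFFIXES = (
    ".jsonl",  # session transcripts: the accepted gap
)


def in_backup_set(rel_path: str) -> bool:
    """Decide membership; exclusions are checked first so they always win."""
    if rel_path.startswith(EXCLUDED_PREFIXES) or rel_path.endswith(EXCLUDED_SUFFIXES):
        return False
    path = PurePosixPath(rel_path)
    # Top level of ~/code/ only: files in subdirectories need an explicit rule.
    if path.parent == PurePosixPath("code") and path.suffix in CODE_SUFFIXES:
        return True
    if (path.parent == PurePosixPath("Library/LaunchAgents")
            and path.name.startswith("com.timtrailor.") and path.suffix == ".plist"):
        return True
    return False
```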

The one-hour sequence

The rebuild is twelve ordered steps. The steps that involve a human decision are called out after the list; the rest are mechanical.

1. Reinstall the operating system and developer tooling: macOS from recovery, then the Xcode command-line tools, then Homebrew. This is the slowest step; it depends on internet speed more than anything else.

2. Install Tailscale and rejoin the tailnet. The Mac Mini’s Tailscale address is 100.126.253.40; the local-network address is 192.168.0.172. Everything downstream of this step requires the Mac Mini to be addressable from other devices on the tailnet.

3. Generate a new Secure Shell (SSH) keypair. Add the public key to the timtrailor-hash GitHub account so private repositories can be cloned.

4. Clone the control plane repository first. This is the install orchestrator; its deploy.sh does the rest of the setup.

5. Clone the application repositories. Eight repositories: the mobile conversation server, the printer tools, the governors’ application, and five iOS application repositories.

6. Restore the memory tree. The memory git repository is managed separately under a dedicated SSH configuration. This step clones the repository into the location Claude Code expects.

7. Restore non-source artefacts from Google Drive: the credentials file, OAuth tokens, Slack configuration, Model Context Protocol (MCP) approval lists, the encrypted governors’ context, the Apple Push Notification signing key, and Transport Layer Security certificates. The backup script has a restore mode that takes a manifest and pulls each file from Drive.

8. Seed the macOS Keychain with secrets. The keychain is deliberately not in the Drive backup. Each secret is added explicitly; the list is in the service manifest (services.yaml) under secrets: blocks. The source of each secret is either a printed backup code kept offline, or a regeneration from the upstream service (a fresh Anthropic Application Programming Interface (API) key, for instance, minted on login to the Anthropic console).

9. Seed a file that contains the keychain password. A small file at ~/.keychain_pass is required by a LaunchAgent that unlocks the keychain at boot, so that daemons running without an interactive login can read from it. The file is chmod 600 (readable and writable by its owner only). This is an accepted trade-off for a headless Mac Mini with physical security.

10. Re-download the school governors’ documents from GovernorHub. The sync script authenticates with a cookie stored in the keychain; the first run restores the local copy.

11. Run the atomic deployment script from the control plane repository. This installs the LaunchAgent plists, symlinks hooks, rules, subagents, and skills into ~/.claude/, and runs the verification script. If verification fails, the script automatically rolls back to the previous state; if it passes, the full operating environment is in place.

12. Reboot. Verify. The verification suite is described in the next section.
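Step 7’s restore mode takes a manifest and pulls each file from Drive. A sketch of that loop with the Drive download injected as a callable, so the logic can be shown without assuming a particular client library; the manifest shape (relative path to SHA-256) and every name here are hypothetical. Verifying each checksum before moving a file into place means a corrupt download never overwrites anything.

```python
import hashlib
import os
import tempfile
from pathlib import Path
from typing import Callable


def restore(manifest: dict[str, str], fetch: Callable[[str], bytes], dest: Path) -> list[str]:
    """Restore every file in manifest (rel path -> sha256) under dest; return failures."""
    failures = []
    for rel, expected in sorted(manifest.items()):
        data = fetch(rel)  # e.g. a Drive download keyed by relative path
        if hashlib.sha256(data).hexdigest() != expected:
            failures.append(rel)  # checksum mismatch: leave any existing file untouched
            continue
        target = dest / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        # mkstemp creates the file with owner-only (0600) permissions, which suits
        # credentials and tokens. Writing beside the target and then renaming means
        # a crash mid-restore never leaves a half-written file (rename is atomic on POSIX).
        fd, tmp = tempfile.mkstemp(dir=target.parent)
        with os.fdopen(fd, "wb") as handle:
            handle.write(data)
        os.replace(tmp, target)
    return failures
```

Returning the failures rather than raising on the first one lets a single run report everything that needs attention.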

The human decisions in this list are: steps 8 and 9 (which secrets to seed, whether the trade-off on ~/.keychain_pass is still acceptable), and the implicit decision at step 11 of whether to trust the automatic rollback. Everything else is mechanical.
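Steps 8 and 9 are where a rebuild most easily goes quietly wrong, so a preflight that checks both before the deployment script runs is cheap insurance. A sketch, assuming the secret names have already been read out of the secrets: blocks of services.yaml into a list (YAML parsing needs a third-party library, so it is left out) and that each secret is stored with that name as its keychain service attribute. The default lookup shells out to macOS’s security find-generic-password, which exits non-zero when no matching item exists; it is injectable so the check can be exercised off a Mac.

```python
import stat
import subprocess
from pathlib import Path
from typing import Callable, Iterable


def keychain_has(service: str) -> bool:
    """True if a keychain in the search list holds a generic password for service."""
    result = subprocess.run(
        ["security", "find-generic-password", "-s", service],
        capture_output=True,  # keep item metadata out of the terminal
    )
    return result.returncode == 0


def preflight(secret_names: Iterable[str], pass_file: Path,
              has_secret: Callable[[str], bool] = keychain_has) -> list[str]:
    """Return human-readable problems; an empty list means steps 8 and 9 look done."""
    problems = [f"missing keychain secret: {name}"
                for name in secret_names if not has_secret(name)]
    if not pass_file.exists():
        problems.append(f"{pass_file} does not exist")
    else:
        mode = stat.S_IMODE(pass_file.stat().st_mode)
        if mode != 0o600:
            problems.append(f"{pass_file} has mode {oct(mode)}, expected 0o600")
    return problems
```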

Verifying the rebuild

Declaring recovery complete means passing an ordered sequence of smoke tests. Each test runs in seconds; the total is under five minutes.

- Hook checks and scenarios. The control plane’s verify.sh runs the hook integration tests and the pytest-based scenario suite. The count grows over time as I add cases; the line I trust is “0 failed”, not a fixed pass count. If a hook is silently broken, the scenarios suite catches it.

- Health check. health_check.py --once runs every monitored service through its own probe: printer reachability, daemon liveness, backup freshness, memory system integrity, and more. Expected state: everything green.

- Acceptance tests. acceptance_tests.py runs a broader end-to-end check (41 tests today). Expected compliance: 100%.

- Mobile readout. From my iPhone, open the ClaudeControl or TerminalApp iOS application. The home tab shows the printer status and the service-level health checks. Expected state: no red banners.

- End-to-end printer send. Send a small calibration cube to the Sovol SV08 Max. If the printer accepts the job without requiring a firmware restart (which would be blocked by the layered safety controls), the full toolchain from iOS application through the conversation server through the printer tools is working.

- Backup dry run. backup_to_drive.py --dry-run. A successful run should produce a short upload list, indicating the differential logic is working against the restored manifest.

If any of these fails, the recovery is incomplete. I do not declare done; I investigate the failure and either fix it or roll back and try again.
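The rule that the checks are ordered and any failure means “not done” can be encoded directly. A sketch of a runner that executes the command-line checks in order and stops at the first failure; the invocations mirror the script names above, but their working directory and the python3 and bash interpreters are assumptions, and the mobile readout and printer send stay manual.

```python
import subprocess
from typing import Sequence

# Assumed invocations; the post does not say where the scripts live.
CHECKS: list[tuple[str, Sequence[str]]] = [
    ("hook checks and scenarios", ["bash", "verify.sh"]),
    ("health check", ["python3", "health_check.py", "--once"]),
    ("acceptance tests", ["python3", "acceptance_tests.py"]),
    ("backup dry run", ["python3", "backup_to_drive.py", "--dry-run"]),
]


def run_checks(checks=CHECKS, timeout: float = 300.0) -> tuple[bool, list[str]]:
    """Run checks in order; stop at the first failure. Returns (ok, log)."""
    log = []
    for name, argv in checks:
        try:
            # timeout applies per check, not to the whole sequence
            result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
        except (OSError, subprocess.TimeoutExpired) as exc:
            log.append(f"FAIL {name}: {exc}")  # missing script or hung check
            return False, log
        if result.returncode != 0:
            log.append(f"FAIL {name}: exit {result.returncode}")
            return False, log
        log.append(f"ok   {name}")
    return True, log
```

Stopping early is deliberate: a later check is rarely meaningful once an earlier one has failed.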

Emergency access while the rebuild is in progress

The rebuild is not the only path back to a working system. If the Mac Mini is being rebuilt but I need emergency access to part of the infrastructure, several paths remain:

- The laptop. A partial mirror of ~/code/ exists on the MacBook Pro. It can run the memory server and health check locally, so while the Mac Mini is down, the laptop can temporarily serve as the conversation server, memory host, and iOS application backend. The iOS applications have configurable backend addresses; switching from the Mac Mini’s Tailscale address (100.126.253.40) to the laptop’s (100.112.125.42) shifts the load.

- The printers. Printer tools query Moonraker on the printer’s own local network address (192.168.0.108 for the SV08 Max). Any machine on the local network or the tailnet can drive the printer without the Mac Mini. An emergency pause command is a single curl against Moonraker.

- GovernorHub sync. The sync script runs on any machine with Python 3.11 and the GovernorHub session cookie. If the Mac Mini is down but an inspection is imminent, the sync and the governors’ application both run on the laptop or a web-hosted version.
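The emergency pause is a POST to Moonraker’s /printer/print/pause endpoint, for example curl -X POST http://192.168.0.108:7125/printer/print/pause. The same call from Python’s standard library is sketched below; port 7125 is Moonraker’s default and is an assumption for this setup (many installs also proxy it on port 80), as is the absence of an API key on the local network.

```python
import json
import urllib.request

PRINTER = "192.168.0.108"  # the SV08 Max's local address, from the post
MOONRAKER_PORT = 7125      # Moonraker's default port; an assumption here


def pause_request(host: str = PRINTER, port: int = MOONRAKER_PORT) -> urllib.request.Request:
    """Build the POST that asks Moonraker to pause the current print."""
    return urllib.request.Request(f"http://{host}:{port}/printer/print/pause", method="POST")


def pause_print(host: str = PRINTER, port: int = MOONRAKER_PORT, timeout: float = 5.0) -> dict:
    """Send the pause and return Moonraker's JSON reply; raises on network errors."""
    with urllib.request.urlopen(pause_request(host, port), timeout=timeout) as response:
        return json.load(response)
```

The short timeout is a deliberate choice for an emergency path: if the printer is unreachable, it is better to find out in seconds and try another route.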

The architecture point here: no single daemon on the Mac Mini is a strict single point of failure. Each of the critical pieces has at least one fallback, and the fallbacks are documented in the runbook.

The parts I have actually tested

Writing a runbook and executing a runbook are different activities. Several parts of mine have been executed under real pressure, not just in dry runs.

Step 7, the restore of non-source artefacts. I have had to restore the credentials file and OAuth tokens twice, once after a botched rename and once after a configuration change that broke authentication. Both times the restore completed in under five minutes. The backup was fresh, the files were exactly what I expected, and nothing had drifted.

Step 11, the atomic deployment with rollback. I have triggered the rollback three times, always in development rather than recovery. Each time the rollback reverted the deployed state to the previous snapshot and left the system running on the old configuration. The discipline of treating ~/.claude/hooks/, the LaunchAgent plists, and the deployed configuration as outputs of the control plane, not things to edit directly, is what makes the rollback reliable.
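A minimal sketch of the snapshot, deploy, verify, rollback pattern that this discipline makes possible. It copies a source tree into place rather than symlinking, and keeps the previous tree beside it as the snapshot; the function name, the .previous suffix, and the copy strategy are illustrative assumptions, not how the control plane’s deploy.sh actually works.

```python
import shutil
from pathlib import Path
from typing import Callable


def deploy(source: Path, target: Path, verify: Callable[[Path], bool]) -> bool:
    """Replace target with a copy of source; keep it only if verify(target) passes."""
    backup = target.with_name(target.name + ".previous")
    if backup.exists():
        shutil.rmtree(backup)  # only one generation of snapshot is kept
    had_previous = target.exists()
    if had_previous:
        target.rename(backup)  # snapshot: set the old tree aside rather than copying it
    try:
        shutil.copytree(source, target)
        ok = verify(target)
    except Exception:
        ok = False  # a failed copy or a crashing verifier counts as a failed deploy
    if ok:
        return True
    if target.exists():
        shutil.rmtree(target)  # roll back: discard the new state...
    if had_previous:
        backup.rename(target)  # ...and put the snapshot back
    return False
```

The sketch only works because nothing edits the target directly: any hand edit made there would be lost on the next deploy or rollback.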

Step 10, the GovernorHub sync. I tested this explicitly in April 2026 by deleting the local copy and running the sync cold. It took roughly eight minutes to re-download the full set. The governors’ application rebuilt its encrypted context from the restored documents without intervention.

The full rebuild. I have not done a full one-hour rebuild since building the runbook in this form. The components that make up the rebuild have been exercised in isolation, and the control plane’s verification suite is reliable enough that I believe the composition works. But the claim of “under an hour” is, until I execute a real rebuild end-to-end, a claim with a forward-looking component. I will update this post when I have done a cold run.

The gaps I have chosen

A runbook that claims complete protection is lying. Three gaps in mine are real.

First, the JSONL transcripts. As noted above, the conversation history is not backed up. The facts survive in topic files; the searchability does not. This is accepted.

Second, the keychain. The keychain itself is not in the backup. Secrets are seeded from printed backup codes kept offline, from upstream services (regenerated API keys), or from out-of-band channels. This is safer than backing the keychain up to Drive, but it means recovery requires access to those offline materials. If they are lost simultaneously with the Mac Mini, several secrets have to be regenerated from their upstream services. That is more work, not impossible work.

Third, the Drive backup is not encrypted at rest under a user-held key: the credentials file, the OAuth tokens, and the Transport Layer Security private key sit on Drive as plaintext. Access to the Google account is guarded by two-factor authentication and two account-recovery channels (my wife’s email and my phone). If all three are compromised simultaneously, the attacker has the backup. I have decided this is an acceptable trade-off for a single-operator setup; a more paranoid threat model would warrant envelope encryption before upload.

Each of these gaps is documented in the runbook. Every quarter I re-read them and check whether the decision to accept them is still correct.

What the runbook has taught me

Two things.

The first is that the difference between “system I could rebuild” and “system I have a procedure to rebuild” is a substantial piece of engineering work. Every ad-hoc secret I seeded from memory at some point had to become a keychain entry with a documented source. Every config file I had edited by hand at some point had to become either a file under control-plane management or an explicit entry in the restore list. Every dependency had to be named. The runbook exposed the gaps; closing the gaps was the actual work.

The second is that the runbook is useful even when nothing has failed. It is a complete, up-to-date map of the operational system. Reading through it periodically is the cheapest way I know to audit what I actually run. Several times during the writing and revising of the runbook I noticed services that were no longer needed, configuration files that were stale, and secrets that should have been rotated. The runbook is the inventory. The inventory is the hygiene.

Disaster recovery, at the scale of a one-person setup, is not primarily about protecting against disaster. It is about knowing, in specific detail, what you actually have. Once you know that, the recovery itself is a script.

The runbook lives at ~/code/DISASTER_RECOVERY.md on the Mac Mini and is backed up daily. It is version-controlled but also directly edited. The version dated 2026-04-19 is the current authoritative source. If this post and the runbook disagree, the runbook wins.