Most of my recent development has happened on my phone. Not because phones are good development environments. Because I am usually doing something else at the same time, and the something else needs me present. Watching my kids in a playground. Sitting on the edge of a pool during swimming lessons. Standing at a school gate. Waiting for an appointment. The hours I once lost to low-density parenting I now spend iterating on the infrastructure this site describes, from my phone, talking to an agent that runs on a Mac Mini at home.

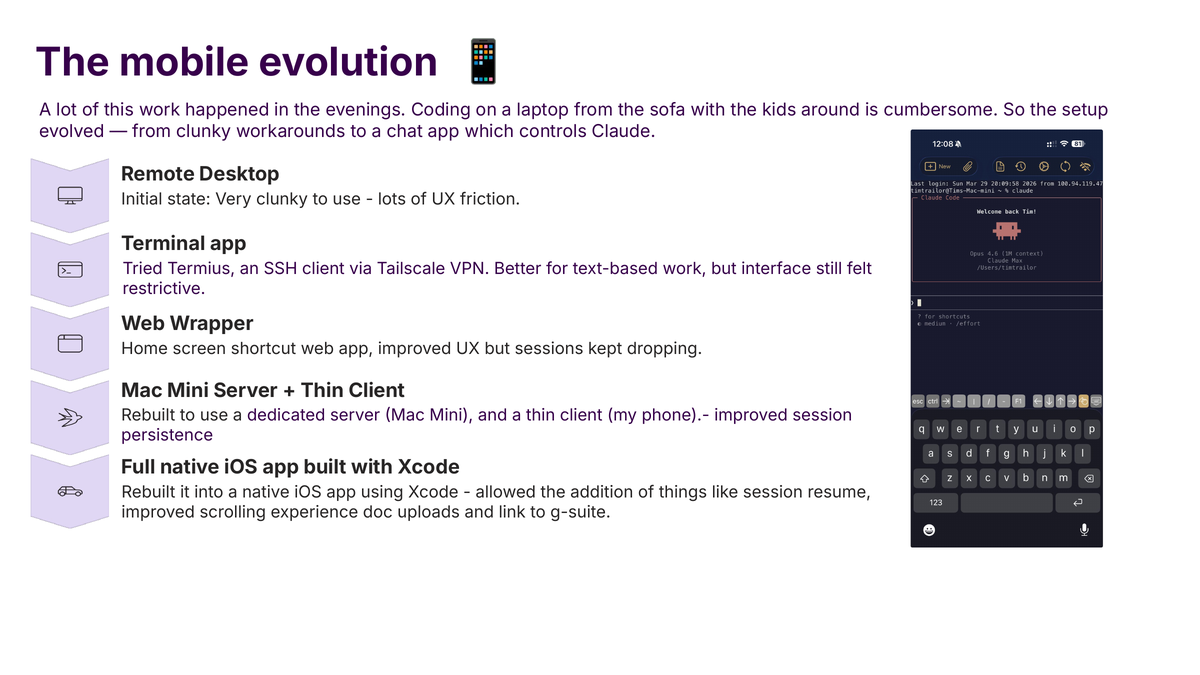

The phone-to-Mac-Mini bridge has gone through five distinct shapes. Each one replaced the previous one because the previous one had become the binding constraint. I am writing them down in order because the trajectory is informative.

Stage 1: Remote desktop

The first version was a remote-desktop client, which drew my Mac Mini’s screen pixel-by-pixel onto my iPhone. This technically worked. In practice it was unusable. A Mac screen rendered at phone resolution is illegible. Keyboard input was a mess because the iOS and macOS keyboards do not share semantics: modifiers, arrow keys, and the command key all misbehaved. Even when I could see and type, any non-trivial action meant a minute of pinch-zooming and tapping. Ten minutes of this was tolerable; an hour was not.

The useful thing I learned from stage 1 was that remote desktop is the wrong abstraction. I did not want my Mac Mini’s screen on my phone. I wanted the capability, not the interface.

Stage 2: Secure Shell terminal applications

The next step was dropping the graphical layer entirely. I installed a Secure Shell (SSH) client on the phone, connected to the Mac Mini over Tailscale (a peer-to-peer virtual private network mesh that makes my devices reachable from anywhere without me having to configure anything), and ran the coding assistant in a terminal.

This was a substantial jump. An SSH session carries plain terminal text, which suits precisely this case: it needs little bandwidth and stays readable on a small screen. The phone keyboard became tolerable because the interface was now plain text. I could scroll. I could search. I could copy output. The limiting factor shifted from pixel density to connection resilience. An SSH session is tied to a single TCP connection, so every time the phone changed network (cellular to Wi-Fi, Wi-Fi to another Wi-Fi, any brief cell blackout) the session would drop and I would lose my place.

Mosh (the mobile shell, which logs in over SSH and then carries the session over its own UDP-based protocol, designed for exactly this problem) fixed most of the session-resilience issue. With Mosh, walking out of the house mid-session and switching to cellular kept the session alive. That was the first version that worked well enough for real work rather than just checking in.
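
As a rough illustration of the choice described above (not a script from this setup), the sketch below prefers Mosh when a client is installed and otherwise falls back to SSH with keepalives, so that a dead connection fails quickly instead of hanging. It is written for a machine with Python rather than for a phone app, where the same options are usually settings in the SSH client. The function name `build_remote_command` and the host `me@mac-mini` are hypothetical; `ServerAliveInterval` and `ServerAliveCountMax` are standard OpenSSH client options.

```python
import shutil
from typing import List, Optional


def build_remote_command(target: str, have_mosh: Optional[bool] = None) -> List[str]:
    """Return the argv for an interactive session on `target` (e.g. "user@host")."""
    if have_mosh is None:
        # Detect a local mosh client on PATH.
        have_mosh = shutil.which("mosh") is not None
    if have_mosh:
        # Mosh keeps the session alive across network changes.
        return ["mosh", target]
    # Plain SSH fallback: keepalives make a dead connection fail after
    # roughly 15 s x 3 missed replies instead of hanging indefinitely.
    return [
        "ssh",
        "-o", "ServerAliveInterval=15",
        "-o", "ServerAliveCountMax=3",
        target,
    ]
```

Passing the result to `subprocess.run(build_remote_command("me@mac-mini"))` would open the session. The keepalive values are illustrative assumptions, not tuned numbers.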

Stage 3: Web wrapper

Stage 3 was a Flask application running on the Mac Mini that exposed the coding assistant over the web. The phone would open the web page; the page would render the current session; I could type into it from any browser, without needing a separate SSH app installed. It served a useful purpose for about six weeks.

The reason I moved past it was that web-page sessions are not terminals. Rendering tool outputs, colour codes, structured responses, and complex formatting in a browser without it feeling like a bad terminal is a real engineering project, and I was not in the business of building a bad terminal. The web wrapper also did not handle state well: if the phone locked and unlocked, the connection to the web page frequently stalled, and the tab needed refreshing.
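
One common way to make a stalled tab cheap to recover, sketched here as an assumption rather than as what the wrapper actually did, is to keep the session output in an append-only log on the server and let the page ask for everything after the last chunk it saw. The class `SessionLog` and its methods are hypothetical names; in a Flask application the `since` call would sit behind a route that the page polls or re-requests after unlocking.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SessionLog:
    """Append-only record of session output, addressed by chunk index."""

    chunks: List[str] = field(default_factory=list)

    def append(self, text: str) -> int:
        """Store one chunk of output and return the cursor just past it."""
        self.chunks.append(text)
        return len(self.chunks)

    def since(self, cursor: int) -> Tuple[List[str], int]:
        """Return every chunk at or after `cursor`, plus the new cursor."""
        # Clamp so a stale or malformed cursor cannot index out of range.
        cursor = max(0, min(cursor, len(self.chunks)))
        return self.chunks[cursor:], len(self.chunks)


# The client saw two chunks, then the phone locked while a third arrived.
log = SessionLog()
log.append("$ make test\n")
cursor = log.append("running 12 tests\n")
log.append("12 passed\n")
missed, cursor = log.since(cursor)  # only the chunk the client had not seen
```

The client only has to remember one integer, so a refresh after unlocking replays the missed output instead of starting from an empty page.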

The web wrapper is still there, partly because occasionally I want to log in from a browser I do not own (on a laptop at a client site, for instance). But it stopped being the primary path for daily use.

Stage 4: Thin-client iOS application

Stage 4 was a native iOS application built in Swift, running on the phone, connecting to the Mac Mini over Tailscale, rendering the session natively rather than through a browser. This is the stage I was in for most of the time I was building the systems described elsewhere on this site. The result is a terminal that behaves like a terminal but looks like an iOS application: big touch-friendly buttons, keyboard management that respects the software keyboard, no browser getting in the way.

Push notifications became possible at this stage, because a native application can register for Apple Push Notification service (APNs) and receive background pushes. That is how the system tells me when a long-running task has finished, when a health check fails, when a printer needs attention. Before this stage, the system could not interrupt me; at stage 4, it could.
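
To make the server side of this concrete, here is a minimal sketch of the request a machine like the Mac Mini would send to APNs for a visible alert. It only builds the URL, headers, and JSON body; actually sending it requires HTTP/2 and provider authentication (a token or certificate), both omitted here. The function name `build_apns_request`, the device token, and the bundle identifier `com.example.app` are placeholders, not values from the application described above.

```python
import json

# Apple's production APNs host; the device token is appended to /3/device/.
APNS_HOST = "https://api.push.apple.com"


def build_apns_request(device_token: str, bundle_id: str, title: str, body: str) -> dict:
    """Build the URL, headers, and JSON body for a visible alert notification."""
    payload = {
        "aps": {
            "alert": {"title": title, "body": body},
            "sound": "default",
        }
    }
    return {
        "url": f"{APNS_HOST}/3/device/{device_token}",
        "headers": {
            "apns-topic": bundle_id,     # the app's bundle identifier
            "apns-push-type": "alert",   # a user-visible notification
            "apns-priority": "10",       # deliver immediately
        },
        "body": json.dumps(payload),
    }


# Hypothetical example: tell the phone that a long-running task has finished.
request = build_apns_request(
    "abc123", "com.example.app", "Task finished", "The long-running build completed."
)
```

Keeping the request construction separate from the sending code means the same payload builder can serve task completions, failed health checks, and any other event that should interrupt the phone.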

The iOS application is open source at github.com/timtrailor-hash/ClaudeCode. It has no hardcoded secrets and no personal configuration. Someone with their own Mac Mini and a Tailscale account could use it as-is.

Stage 5: Claude Remote-Control

Stage 5 is the one I expect to replace stage 4 over time. Claude Code now has an official remote-control mode, which exposes a session to a web-based front-end at claude.ai/code. The implementation is Anthropic’s; I do not need to maintain my own iOS application or web wrapper. It is faster, more reliable, and better integrated with the tools than my own build was.

I still have the iOS application installed because (a) it supports push notifications directly, (b) it is more responsive to the specific conventions of my own setup (hooks, skills, memory), and (c) I have not yet finished migrating. Over the next few months I expect the iOS application to become a notification shell and an occasional fallback, with Remote-Control as the daily driver.

The useful observation

The five stages are not the interesting part. The interesting part is what I would otherwise not have built.

Every system on this site exists because I had time to build it. I do not have time that is not also time with my kids or time asleep. Building from my phone is the only reason the memory system, the hooks, the safety layers, the skills, the automation, and the iOS apps exist. Had the binding constraint stayed at “I can only build at my desk”, most of that list would never have been built.

I am wary of reading too much into this. Plenty of work genuinely needs a real keyboard, a real screen, and a real chair. Code that touches hardware, code that requires deep concentration, code that needs careful visual review. For that work I still use the laptop or the Mac Mini itself. But the line between “I need a real computer” and “I need to make a judgement call and move on” has moved, and an increasing share of the work I do is the latter.

The practical advice, if someone with young children and an interest in this kind of work is reading, is to skip stage 1 and go straight to stage 4 or 5. Mosh on a terminal application is better than nothing. A native client is better than Mosh. Claude Remote-Control is better than a native client. The investment in setting any of them up is an afternoon.

The rest of your life does not pause while you build. It does not have to.

Tailscale IPs for the devices in my setup are in the main post; the iOS app source is public but it is not the point. The point is that the phone is the limiting factor only until it is not.